As Google releases Gemma 4, its latest family of open large language models, there is a short history behind it. These models are built on the same technological and research foundations as the Gemini 3 Pro models, which were released late last year and marked a big step forward for proprietary large language models. Gemma 4 brings those improvements to open models, with a focus on advanced reasoning and agentic workflows. It is natively trained on over 140 languages.

Gemma 4, with four being the generation reference in the name, is also available in four model sizes: Effective 2B (E2B), Effective 4B (E4B), 26B Mixture of Experts (MoE), and 31B Dense. The 26B and 31B models are geared for frontier intelligence, with offline compute on personal systems. The smaller E2B and E4B models are geared more towards smartphones, mobile devices, and internet of things (IoT) ecosystems, as well as edge devices including the Raspberry Pi and Nvidia’s Jetson.

In case you are wondering how Gemma differs from Gemini, the key difference is that Gemma is an AI processing engine, not a chatbot-style product. While the underlying technology is largely similar, Gemma is an open model that can be downloaded and run locally for free. It also offers much more flexibility to modify, fine-tune, and customise, depending on specific workflows and computing needs. Running these models locally rather than in the cloud also brings data privacy and cost benefits for many applications.

The convenience and flexibility extend across personal, commercial, and enterprise usage.

Google has worked closely with partners, including Qualcomm, MediaTek, and Nvidia, on these models. The new models are released under the Apache 2.0 licence, which gives developers, researchers, and commercial entities significant freedom to use, modify, fine-tune, and redistribute the models with minimal restrictions. Previously, that flexibility was comparatively limited.

“Purpose-built for advanced reasoning and agentic workflows, Gemma 4 delivers an unprecedented level of intelligence-per-parameter. This breakthrough builds on incredible community momentum: since the launch of our first generation, developers have downloaded Gemma over 400 million times, building a vibrant Gemmaverse of more than 100,000 variants,” says Clement Farabet, Vice President of Research at Google DeepMind.

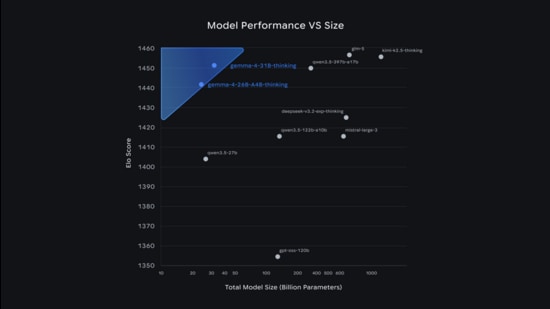

Arena AI, a public, web-based platform that evaluates large language models (LLMs), ranks Gemma 4’s 31 billion parameter model at number three (score of 1452), behind GLM-5 (score of 1456) and Kimi K2.5 Thinking (score of 1453), while the 26 billion parameter model ranks sixth (score of 1441). GLM-5 is made by the Chinese AI company Z.ai, while the Kimi models are developed by the Chinese company Moonshot AI. OpenAI’s gpt-oss-20b open-weight language model, released in August, scores 1318.
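To give a feel for what these score gaps mean, the short sketch below converts a score difference into an expected head-to-head preference rate. It assumes that Arena scores behave like Elo ratings on the conventional 400-point logistic scale; that is an assumption made here for illustration, and the function name and `scale` parameter are hypothetical.

```python
# Assumption: Arena scores are treated as Elo-style ratings on a 400-point scale.
# The scores themselves are the ones quoted in the article.
SCORES = {
    "GLM-5": 1456,
    "Kimi K2.5 Thinking": 1453,
    "Gemma 4 31B": 1452,
    "Gemma 4 26B": 1441,
    "gpt-oss-20b": 1318,
}


def expected_win_rate(score_a: float, score_b: float, scale: float = 400.0) -> float:
    """Expected share of comparisons in which A is preferred over B (Elo formula)."""
    return 1.0 / (1.0 + 10.0 ** ((score_b - score_a) / scale))


if __name__ == "__main__":
    reference = "Gemma 4 31B"
    for name, score in SCORES.items():
        if name == reference:
            continue
        rate = expected_win_rate(SCORES[reference], score)
        print(f"{reference} vs {name}: {rate:.1%}")
```

Under that assumption, a gap of a few points, such as 1452 versus 1456, is close to a coin flip, while the 134-point gap to gpt-oss-20b corresponds to being preferred in roughly two out of three comparisons.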

Farabet notes that Gemma 4 outcompetes models 20x its size. Gemma 4 is expected to handle a wide variety of generative AI tasks with text, audio, and image input, with support for over 140 languages and long context windows of 128K and up to 256K tokens. The 31B and 26B parameter models are designed for higher-end servers with powerful GPUs, including Nvidia’s H100.

“The 26B and 31B models are designed for high-performance reasoning and developer-centric workflows, making them well-suited for agentic AI. Optimized to deliver state-of-the-art, accessible reasoning, these models run efficiently on NVIDIA RTX GPUs and DGX Spark — powering development environments, coding assistants, and agent-driven workflows,” notes Michael Fukuyama, Product Manager at Nvidia.

The 2 billion and 4 billion parameter footprints of the E2B and E4B models will be crucial for Gemma 4’s utility on mobile devices, with optimisations to conserve RAM and battery life. Nvidia confirms that the Jetson Orin Nano supports the Gemma 4 E2B and E4B variants, enabling multimodal inference on small, embedded, and power-constrained systems, with the same model family scaling across the Jetson platform up to Jetson Thor.
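As a rough illustration of why parameter count matters for RAM on such devices, the sketch below estimates the memory needed just to hold the model weights at a few numeric precisions. The parameter counts are the nominal figures from the model names, and the bytes-per-parameter values are common precisions chosen for illustration; neither reflects published memory requirements for Gemma 4, and real usage also includes activations, the context cache, and runtime overhead.

```python
# Back-of-the-envelope estimate: weight memory = parameters x bytes per parameter.
# Assumption: nominal parameter counts taken from the model names, not official figures.
NOMINAL_PARAMS = {
    "E2B": 2e9,
    "E4B": 4e9,
    "26B MoE": 26e9,
    "31B Dense": 31e9,
}

# Assumption: common storage precisions, chosen for illustration only.
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return the memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for model, params in NOMINAL_PARAMS.items():
        row = ", ".join(
            f"{name}: {weight_memory_gb(params, width):.1f} GB"
            for name, width in BYTES_PER_PARAM.items()
        )
        print(f"{model:>9} -> {row}")
```

Two caveats apply to these estimates. A Mixture of Experts model typically needs all of its experts resident in memory even though only some are active per token, so the 26B figure is not reduced by sparsity. The "Effective" label on the smaller models may also mean the total stored weights differ from the nominal count used here.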